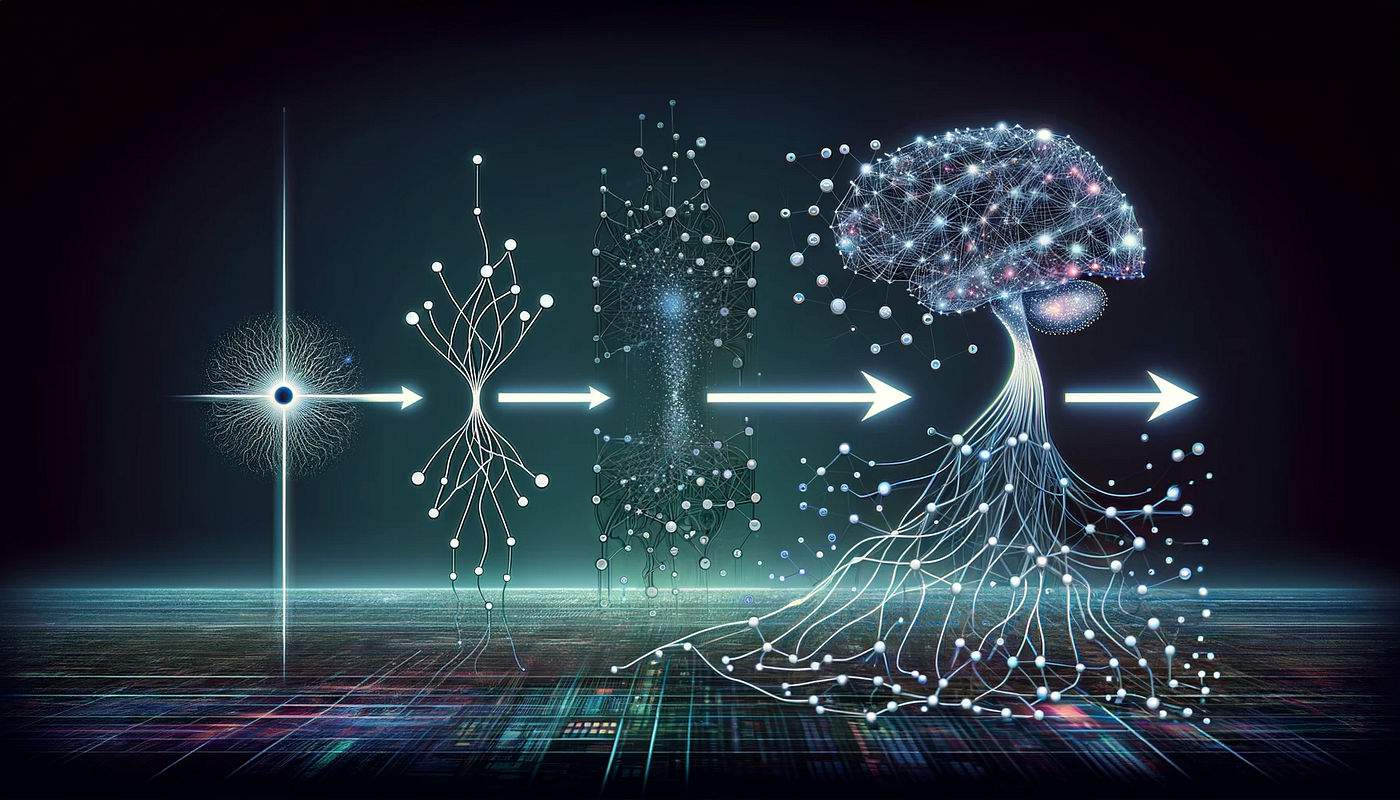

The Evolution of Neural Networks

The journey of neural networks is a fascinating tale of innovation and progression. From their beginnings in the 1950s to today’s cutting-edge architectures, neural networks have reshaped the landscape of artificial intelligence. As we trace this story, we will see how each milestone contributed to the wide range of applications in use now.

One of the most significant early milestones was the Perceptron, introduced by Frank Rosenblatt in 1958. Loosely inspired by biological neurons, it computed a weighted sum of its inputs, produced a binary output by comparing that sum to a threshold, and adjusted its weights whenever it misclassified a training example. Its scope was limited: a single Perceptron can only separate classes that a straight line (or, in higher dimensions, a flat hyperplane) can divide, so it cannot learn a function as simple as XOR. Even so, the Perceptron laid the groundwork for later advances in neural computation and sparked broad interest in artificial intelligence.
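The Perceptron’s learning rule can be sketched in a few lines of Python. This is a minimal illustration rather than Rosenblatt’s original hardware implementation; the AND data set, the learning rate, and the epoch count are assumptions chosen for clarity.

```python
# Minimal sketch of the perceptron learning rule on the AND function.
# The data set, learning rate, and epoch count are illustrative assumptions.

def step(z):
    """Threshold activation: output 1 if the weighted sum is positive, else 0."""
    return 1 if z > 0 else 0

def predict(w, b, x):
    return step(sum(wi * xi for wi, xi in zip(w, x)) + b)

def train_perceptron(samples, lr=1.0, epochs=10):
    """Learn weights and a bias by correcting the model after each mistake."""
    w = [0.0] * len(samples[0][0])
    b = 0.0
    for _ in range(epochs):
        for x, target in samples:
            error = target - predict(w, b, x)  # 0 when correct, +1 or -1 when wrong
            # Shift weights toward the target only when the prediction was wrong.
            w = [wi + lr * error * xi for wi, xi in zip(w, x)]
            b += lr * error
    return w, b

AND = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
w, b = train_perceptron(AND)
print([predict(w, b, x) for x, _ in AND])  # [0, 0, 0, 1]
```

Because AND is linearly separable, the loop settles on working weights within a few passes. Running the same loop on XOR never settles, which is exactly the limitation described above.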

The 1980s brought the widespread adoption of Multi-Layer Perceptrons (MLPs), made practical by the popularization of the backpropagation algorithm for training them. By stacking multiple layers of neurons with non-linear activation functions, MLPs can learn complex, non-linear relationships within data. This was a game-changer, enabling progress in fields such as speech recognition and pattern recognition and paving the way for more sophisticated models.

Moving to the 1990s, Convolutional Neural Networks (CNNs) emerged, primarily focusing on image processing tasks. CNNs utilize convolutional layers that analyze visual data in a hierarchical manner. This architecture dramatically improved the performance of image classification tasks, leading to advancements in facial recognition technology that are now commonplace in social media platforms and security applications.

In sequence prediction and natural language processing, Recurrent Neural Networks (RNNs) became a powerful tool. An RNN carries a hidden state from one step of a sequence to the next, so its output at each step can depend on the data points that came before. This makes RNNs well suited to tasks like language modeling and text translation, and they have shaped how we interact with technology, from predictive text to virtual assistants.

The introduction of the Transformer architecture in 2017 marked a revolutionary shift in language models. Its self-attention mechanism lets every position in a sequence draw directly on every other position, which helps the model capture context and generate human-like text. This architecture underpins popular models like OpenAI’s GPT series, fostering advancements in content creation, coding assistance, and conversational AI.

These advancements not only reflect the technological progress of machine learning but also highlight the increasing complexity of tasks that neural networks can handle. The implications of these technologies are vast, impacting sectors as diverse as healthcare, where they assist in diagnosing diseases, and finance, where they excel in fraud detection and risk assessment.

As we delve deeper into this article, we will explore not just the evolution but also the key features and differences between these remarkable neural network types. The interplay of theory and technology has propelled us into an era rich with possibilities, and understanding these systems will be crucial for anyone looking to engage with the future of artificial intelligence.

From Simple Models to Complex Systems

The journey from the Perceptron to modern architectures is not merely a tale of technological enhancements; it reflects the evolving understanding of learning and intelligence. In the late 1950s, Frank Rosenblatt’s Perceptron introduced the idea that machines could learn from examples. This sparked a wave of interest in neural networks, but the next significant leap would take decades to materialize.

The 1980s witnessed a renaissance in neural networks with the rise of Multi-Layer Perceptrons (MLPs). These networks stack multiple layers of interconnected neurons, which lets them capture intricate relationships within datasets. Each neuron applies a non-linear activation function to a weighted sum of its inputs; without that non-linearity, any stack of layers would collapse into a single linear transformation. Backpropagation, which computes how much each weight contributed to the error, made it practical to train such networks. This breakthrough encouraged applications like speech recognition, where neural systems helped convert audio input into text and laid groundwork for today’s voice technology.
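To see why a hidden layer with a non-linear activation matters, here is a hand-wired two-layer network that computes XOR, the function a single Perceptron cannot learn. The weights are hypothetical values picked by hand for illustration, not learned by backpropagation.

```python
# Hand-wired two-layer network computing XOR.
# The weights and thresholds are chosen by hand for illustration, not learned.

def step(z):
    return 1 if z > 0 else 0

def xor_mlp(x1, x2):
    # Hidden unit 1 fires if at least one input is 1 (logical OR).
    h_or = step(x1 + x2 - 0.5)
    # Hidden unit 2 fires only if both inputs are 1 (logical AND).
    h_and = step(x1 + x2 - 1.5)
    # Output fires for "OR but not AND", which is exactly XOR.
    return step(h_or - h_and - 0.5)

print([xor_mlp(a, b) for a, b in [(0, 0), (0, 1), (1, 0), (1, 1)]])  # [0, 1, 1, 0]
```

Trained MLPs typically use differentiable activations such as the sigmoid or ReLU instead of a hard threshold, because backpropagation needs gradients to flow through every layer.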

As the field progressed, the 1990s brought forth the emergence of Convolutional Neural Networks (CNNs), specifically designed for processing grid-like data such as images. CNNs innovatively incorporate convolutional layers that apply filters to detect and focus on various features, such as edges and textures, within an image. This hierarchical architecture significantly improved tasks such as image classification and object detection. As a result, CNNs have become the backbone of applications in numerous domains, including:

- Facial recognition in social media platforms

- Autonomous driving systems capable of identifying pedestrians and road signs

- Medical imaging where they aid radiologists in diagnosing conditions from CT scans and MRIs
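The filtering step at the heart of a convolutional layer can be sketched with NumPy. This minimal example assumes a tiny synthetic image and a fixed, Sobel-style vertical-edge filter; in a real CNN the filter values are learned, and libraries add padding, strides, multiple channels, and many filters per layer.

```python
import numpy as np

def conv2d_valid(image, kernel):
    """Slide the kernel over the image (no padding, stride 1) and sum elementwise products.

    Like most deep learning libraries, this computes cross-correlation (the kernel is not flipped).
    """
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    out = np.zeros((out_h, out_w))
    for i in range(out_h):
        for j in range(out_w):
            out[i, j] = np.sum(image[i:i + kh, j:j + kw] * kernel)
    return out

# A 5x5 image: dark (0) on the left, bright (1) on the right, with a vertical edge between.
image = np.array([[0, 0, 1, 1, 1]] * 5, dtype=float)

# Sobel-style vertical-edge filter; a CNN would learn values like these during training.
kernel = np.array([[-1, 0, 1],
                   [-2, 0, 2],
                   [-1, 0, 1]], dtype=float)

edges = conv2d_valid(image, kernel)
print(edges)  # every row is [4, 4, 0]
```

The response is large where the 3x3 window straddles the dark-to-bright boundary and zero over the uniform bright region, which is the sense in which a filter "detects" an edge.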

Meanwhile, the advent of Recurrent Neural Networks (RNNs) around the same period introduced new possibilities for sequence prediction and tasks involving temporal data. By maintaining memory through internal states, RNNs could analyze previous inputs, making them especially effective for tasks such as language translation and time series forecasting. This temporal capability has enabled technologies such as predictive text input and music generation systems, showcasing the versatility of RNNs.
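Maintaining memory through an internal state can be made concrete with a vanilla (Elman-style) RNN cell. This NumPy sketch assumes random, untrained weights and arbitrary sizes; practical systems usually train gated variants such as LSTMs or GRUs.

```python
import numpy as np

def rnn_forward(inputs, W_xh, W_hh, b_h):
    """Run a vanilla RNN over a sequence and return the hidden state at every step."""
    h = np.zeros(W_hh.shape[0])
    states = []
    for x_t in inputs:
        # The new state mixes the current input with the previous state,
        # which is how information from earlier steps is carried forward.
        h = np.tanh(W_xh @ x_t + W_hh @ h + b_h)
        states.append(h)
    return np.array(states)

rng = np.random.default_rng(0)
input_size, hidden_size = 3, 4
W_xh = rng.normal(scale=0.5, size=(hidden_size, input_size))  # untrained, illustrative weights
W_hh = rng.normal(scale=0.5, size=(hidden_size, hidden_size))
b_h = np.zeros(hidden_size)

seq_a = rng.normal(size=(5, input_size))  # a sequence of 5 input vectors
seq_b = seq_a.copy()
seq_b[0] += 1.0  # change only the first element of the sequence

states_a = rnn_forward(seq_a, W_xh, W_hh, b_h)
states_b = rnn_forward(seq_b, W_xh, W_hh, b_h)
print(states_a[-1])
print(states_b[-1])
```

Changing only the first input alters the final hidden state, which is how an RNN lets earlier data points influence later predictions.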

In recent years, the Transformer architecture has revolutionized the way we approach natural language processing and understanding. It employs self-attention mechanisms that let a model weigh the significance of every part of an input sequence when processing each element, leading to improved context comprehension. Transformers power popular applications like OpenAI’s GPT series, which have made content generation widely accessible and advanced interactive AI systems.
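Self-attention can be sketched as single-head scaled dot-product attention, the core operation of the Transformer. This NumPy version is a simplification: random projection matrices stand in for learned ones, and it omits multiple heads, positional encodings, and the causal mask that GPT-style models apply so each token attends only to earlier tokens.

```python
import numpy as np

def softmax(z, axis=-1):
    z = z - z.max(axis=axis, keepdims=True)  # subtract the max for numerical stability
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)

def self_attention(X, W_q, W_k, W_v):
    """Single-head scaled dot-product self-attention over X of shape (n, d_model)."""
    Q, K, V = X @ W_q, X @ W_k, X @ W_v
    d_k = K.shape[-1]
    # Row i of `weights` says how much position i attends to every position.
    weights = softmax(Q @ K.T / np.sqrt(d_k), axis=-1)
    return weights @ V, weights

rng = np.random.default_rng(0)
n, d_model, d_k = 4, 8, 8
X = rng.normal(size=(n, d_model))  # stand-in token embeddings
W_q, W_k, W_v = (rng.normal(size=(d_model, d_k)) for _ in range(3))  # untrained projections

out, weights = self_attention(X, W_q, W_k, W_v)
print(out.shape)             # (4, 8)
print(weights.sum(axis=-1))  # each row sums to 1 (up to rounding)
```

Each output row is a weighted average of the value vectors, so every token’s new representation can draw on context from the whole sequence in a single step.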

The rapid evolution of these architectures has not only underscored their technological sophistication but also established neural networks as pivotal tools in an array of fields from healthcare to finance. As we continue this exploration, we will delve into the unique attributes of each architecture, elucidating how these developments have fostered an era teeming with potential in artificial intelligence.

Neural networks, a subset of machine learning, have undergone remarkable transformations since their inception. The development of the Perceptron in the late 1950s marked the beginning of this evolution. Designed by Frank Rosenblatt, the Perceptron loosely mimicked a single biological neuron, processing input data to produce a binary outcome. Though limited in its capabilities, its simplicity spurred further research and eventually led to multi-layer neural networks.

As research progressed through the 1980s and 1990s, innovations such as backpropagation made it possible to train deeper networks that could learn more complex functions. This period also saw the adoption of diverse activation functions, which enhanced the networks’ ability to tackle non-linear problems. Recurrent Neural Network (RNN) and Convolutional Neural Network (CNN) architectures went on to revolutionize natural language processing and computer vision, respectively: RNNs process sequential data, while CNNs recognize spatial hierarchies in images.

In the 21st century, the shift to deep learning, which stacks many layers of neurons, brought breakthroughs in performance across numerous applications, including speech recognition, autonomous vehicles, and even generative art. Transfer learning lets a model reuse knowledge from one task so it can learn a new task from a smaller dataset, while reinforcement learning lets networks adapt to new environments through trial and error. Advances in hardware, particularly the widespread adoption of GPUs for training, have also sharply reduced the time required to train sophisticated neural networks.

The combination of evolving algorithms and powerful computational resources continues to push past the limits of traditional machine learning methods, promising even greater strides in artificial intelligence. As we delve deeper into the subject, it is worth examining advances in architecture design such as ResNet, whose skip connections made very deep networks trainable, and the Transformer. These innovations are redefining the landscape and have achieved state-of-the-art results across many benchmarks. The journey from the Perceptron to current architectures illustrates not just a technological evolution but also a growing understanding of how to harness artificial intelligence for diverse, real-world applications, and it invites further exploration of the capabilities of neural networks and their impact on our future.
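Backpropagation is the chain rule applied from the loss back to each weight. The sketch below assumes a single sigmoid neuron with a squared-error loss and arbitrary example values; it computes the gradients analytically and checks them against a finite-difference estimate, a common way to validate a backpropagation implementation.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def loss(w, b, x, y):
    """Squared error of a single sigmoid neuron on one example."""
    return 0.5 * (sigmoid(w * x + b) - y) ** 2

def backprop_grads(w, b, x, y):
    """Apply the chain rule backward from the loss to the parameters."""
    a = sigmoid(w * x + b)
    dL_da = a - y           # derivative of 0.5 * (a - y)^2 with respect to a
    da_dz = a * (1.0 - a)   # derivative of the sigmoid with respect to z
    dL_dz = dL_da * da_dz
    return dL_dz * x, dL_dz  # (dL/dw, dL/db), since z = w * x + b

w, b, x, y = 0.8, -0.3, 1.5, 1.0  # arbitrary illustrative values
gw, gb = backprop_grads(w, b, x, y)

# Central finite-difference estimates of the same gradients.
eps = 1e-6
num_gw = (loss(w + eps, b, x, y) - loss(w - eps, b, x, y)) / (2 * eps)
num_gb = (loss(w, b + eps, x, y) - loss(w, b - eps, x, y)) / (2 * eps)
print(gw, num_gw)
print(gb, num_gb)
```

In a multi-layer network the same pattern repeats layer by layer: each layer receives the gradient of the loss with respect to its output and passes back the gradient with respect to its input.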

Breaking New Ground: The Rise of Specialized Architectures

As the landscape of neural networks continues to evolve, a trend toward specialization has emerged, giving rise to novel architectures tailored to the demands of specific applications. This trend is particularly evident in Generative Adversarial Networks (GANs), introduced in 2014 by Ian Goodfellow and his colleagues. A GAN pits two neural networks against each other: a generator, which turns random noise into synthetic samples, and a discriminator, which tries to tell those samples apart from real data. As each network improves in response to the other, the generator learns to produce strikingly realistic synthetic data. This architecture has been instrumental in several breakthrough applications, from creating high-resolution images to generating deepfake videos that challenge our perceptions of reality.
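The competition between generator and discriminator is usually expressed through their loss functions. This NumPy sketch assumes the discriminator outputs probabilities and uses placeholder outputs instead of real networks; the generator loss shown is the widely used non-saturating variant rather than the literal minimax form.

```python
import numpy as np

def discriminator_loss(d_real, d_fake):
    """Binary cross-entropy for the discriminator.

    d_real: discriminator probabilities on real samples (it wants these near 1).
    d_fake: discriminator probabilities on generated samples (it wants these near 0).
    """
    return -np.mean(np.log(d_real)) - np.mean(np.log(1.0 - d_fake))

def generator_loss(d_fake):
    """Non-saturating generator loss: the generator wants D(fake) near 1."""
    return -np.mean(np.log(d_fake))

# Placeholder outputs for a batch of 8 from a maximally unsure discriminator.
d_real = np.full(8, 0.5)
d_fake = np.full(8, 0.5)
print(discriminator_loss(d_real, d_fake))  # 2 * ln(2), about 1.386
print(generator_loss(d_fake))              # ln(2), about 0.693
```

Training alternates between the two: the discriminator’s parameters are updated to lower its loss, then the generator’s parameters are updated to lower its own, which pushes D(fake) back up.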

The surge of interest in GANs has been especially visible in creative fields. Artists and designers use GANs in their work, resulting in collaborations where machine-generated art is shown alongside traditional pieces. The potential of GANs extends beyond visual media into music composition and even fashion design, illustrating their versatility and the broad role neural networks now play in both culture and technology.

In parallel, attention mechanisms have reshaped the way neural networks engage with data. They arose from the need to let models focus on the most relevant parts of their input, first gaining prominence in RNN-based machine translation around 2014 before becoming the core of the Transformer. Attention has since been integrated into CNNs and RNNs alike, improving tasks such as machine translation and image captioning by giving models a richer view of context.

When BERT (Bidirectional Encoder Representations from Transformers), released by Google in 2018, made headlines for its strong performance on numerous natural language processing benchmarks, it showcased the power of pre-trained models that can be fine-tuned for specific tasks with relatively small amounts of labeled data. This approach has proved valuable in industries like customer service, where chatbots are becoming more adept at handling inquiries thanks to a better grasp of language nuances.

Simultaneously, the growing field of Neural Architecture Search (NAS) is changing how neural networks are designed. Instead of relying solely on human intuition, NAS uses search algorithms to explore a space of candidate architectures, evaluate them, and keep the best performers; on some benchmarks, the resulting designs have outperformed hand-crafted architectures. NAS can also target models that work efficiently under specific constraints, such as limited computational resources or particular application requirements.
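The core NAS loop (propose an architecture, score it, keep the best) can be sketched with random search, one of the simplest strategies and a common baseline; published NAS methods often use reinforcement learning, evolutionary algorithms, or gradient-based search instead. The search space and the `proxy_score` function below are hypothetical: the score stands in for training each candidate and measuring validation accuracy, with a penalty that mimics a resource budget.

```python
import random

# Hypothetical search space: each key is a design choice, each list its options.
SEARCH_SPACE = {
    "num_layers": [2, 4, 8],
    "hidden_units": [32, 64, 128],
    "activation": ["relu", "tanh"],
}

def sample_architecture(rng):
    return {name: rng.choice(options) for name, options in SEARCH_SPACE.items()}

def proxy_score(arch):
    """Hypothetical stand-in for 'train the candidate, then measure validation accuracy'.

    Rewards capacity up to a point and penalizes large models, mimicking a compute budget.
    A real NAS system would actually train and evaluate each candidate.
    """
    capacity = arch["num_layers"] * arch["hidden_units"]
    bonus = 0.05 if arch["activation"] == "relu" else 0.0
    return min(capacity / 512, 1.0) - capacity / 4096 + bonus

def random_search(n_trials=20, seed=0):
    rng = random.Random(seed)
    best_arch, best_score = None, float("-inf")
    for _ in range(n_trials):
        arch = sample_architecture(rng)
        score = proxy_score(arch)
        if score > best_score:  # keep the best candidate seen so far
            best_arch, best_score = arch, score
    return best_arch, best_score

print(random_search())
```

Swapping `sample_architecture` for a smarter proposal mechanism, such as mutating the current best candidate, turns this baseline into a simple evolutionary search.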

Lastly, the fusion of neural networks with other machine learning paradigms, such as reinforcement learning, signifies a critical advancement in training strategies. This combination allows neural networks to learn in dynamic environments—akin to how humans learn through interactions and experiences. Applications in robotics, game design, and personalized user experiences are a testament to the potential of these hybrid approaches to revolutionize various industries.
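Learning from interaction can be illustrated with tabular Q-learning on a toy corridor. This is a minimal sketch under assumed settings: a five-state environment, a reward only at the goal, and hand-picked learning rate, discount, and exploration rate. Deep reinforcement learning follows the same update but replaces the table with a neural network that estimates action values.

```python
import random

# Toy corridor: states 0..4, every episode starts at 0, reward 1 for reaching state 4.
N_STATES, GOAL = 5, 4
ACTIONS = (-1, +1)  # move left or move right

def env_step(state, action):
    next_state = min(max(state + action, 0), N_STATES - 1)  # walls at both ends
    reward = 1.0 if next_state == GOAL else 0.0
    return next_state, reward, next_state == GOAL

def q_learning(episodes=200, alpha=0.5, gamma=0.9, epsilon=0.1, seed=0):
    rng = random.Random(seed)
    Q = [[0.0, 0.0] for _ in range(N_STATES)]  # Q[state][action_index]
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            # Epsilon-greedy: usually exploit current estimates, sometimes explore (ties broken randomly).
            if rng.random() < epsilon or Q[state][0] == Q[state][1]:
                a = rng.randrange(2)
            else:
                a = 0 if Q[state][0] > Q[state][1] else 1
            next_state, reward, done = env_step(state, ACTIONS[a])
            # Temporal-difference update toward reward plus discounted best next value.
            target = reward if done else reward + gamma * max(Q[next_state])
            Q[state][a] += alpha * (target - Q[state][a])
            state = next_state
    return Q

Q = q_learning()
policy = ["right" if q[1] > q[0] else "left" for q in Q[:GOAL]]
print(policy)
```

With these settings the learned values should favor moving right in every non-goal state, since that is the shortest path to the reward; the agent discovers this only by acting and observing outcomes.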

The relentless pace of innovation within the neural network domain—marked by specialized architectures, attention mechanisms, and novel training methodologies—sets the stage for a future where the boundaries of artificial intelligence are pushed further. As researchers unlock new methodologies and refine existing models, the next chapters in the evolution of neural networks are poised to unleash unprecedented advancements across countless sectors, making AI more adaptable and integral to our everyday lives.

Conclusion: A New Era for Neural Networks

The journey of neural networks from the simple perceptron model to today’s sophisticated architectures demonstrates a remarkable evolution driven by creativity, innovation, and necessity. As we’ve explored, the introduction of specialized structures such as Generative Adversarial Networks (GANs) and the integration of attention mechanisms have reshaped our understanding of what AI can achieve. These advancements not only enhance the performance of neural networks in various applications—ranging from image generation to natural language processing—but also expand their potential in transformative fields such as art, music, and even customer service.

Moreover, the emergence of Neural Architecture Search (NAS) illustrates how automation is paving the way for groundbreaking innovations, optimizing network designs that were once reliant on human intuition and expertise. The synergy of neural networks with other cutting-edge methodologies, such as reinforcement learning, further amplifies their adaptability and effectiveness in real-world scenarios.

As we look to the future, the implications are vast. New discoveries in neural network design and deployment are likely to drive advancements in technology, healthcare, robotics, and beyond. As machines evolve to better understand and interact with the world, we stand on the threshold of an era where complex tasks become more efficient and intuitive. The evolution of neural networks not only underscores the potential of artificial intelligence but also invites us to consider the ethical and practical implications of this profound transformation in our everyday lives. With ongoing research and a growing appetite for innovation, the journey of neural networks is far from over; in many ways, it is just beginning. The possibilities that lie ahead will continue to inspire curiosity and drive exploration as we navigate this remarkable frontier.