Understanding the Intersection of Data Analysis and AI

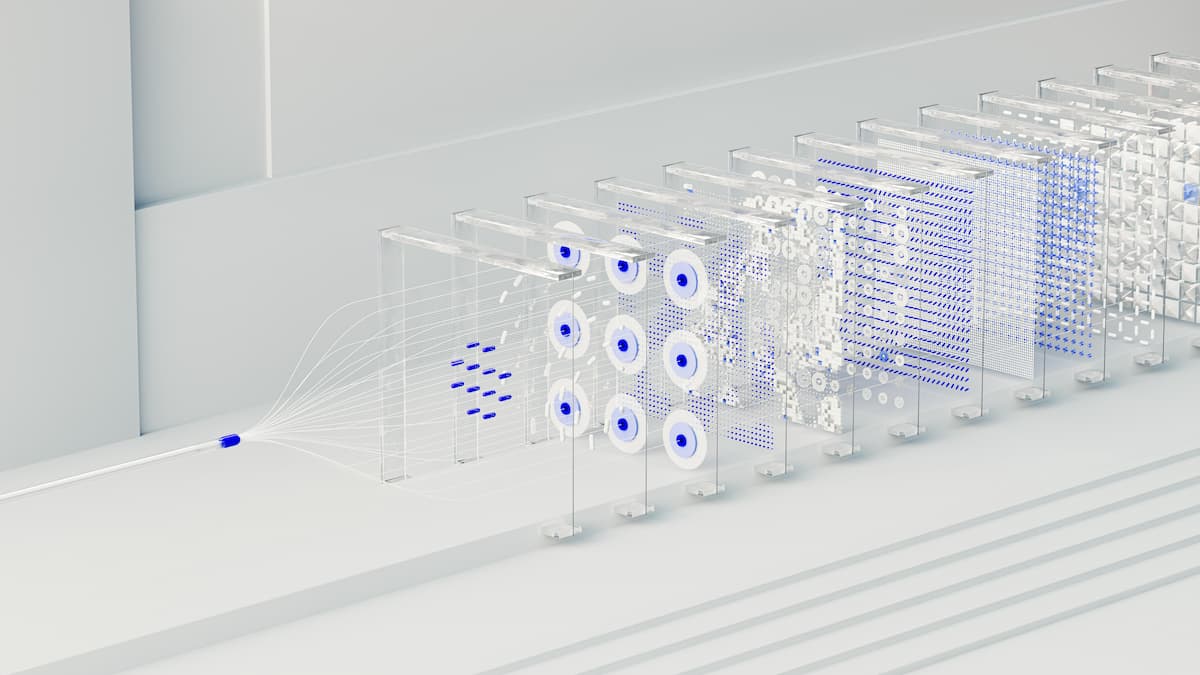

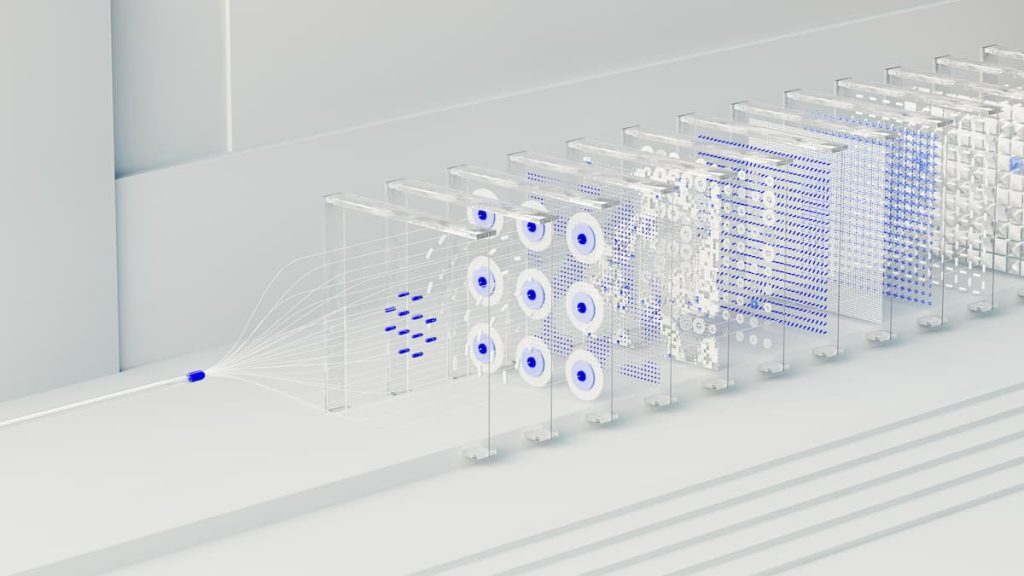

The digital revolution has ushered in an era where data is a precious commodity. Every interaction online generates vast amounts of information. This wealth of data is essential in analyzing patterns, driving decisions, and creating advanced technologies like Explainable Artificial Intelligence (XAI). In this rapidly evolving landscape, companies are transforming raw data into actionable insights, thereby enhancing operational efficiencies and customer experiences.

Why Explainable AI Matters

As we integrate AI into various industries, the need to make these complex systems understandable is becoming increasingly vital. Consider the implications:

- In healthcare, AI systems can analyze patient histories, predict disease outbreaks, and suggest personalized treatment plans. However, transparency in these predictions is essential for medical professionals to trust and act upon them effectively.

- Financial institutions rely heavily on AI for risk assessment and credit scoring. These automated decisions shape consumers’ financial futures, so transparency is not just an expectation but often a regulatory requirement. In the United States, for example, lenders must tell applicants the principal reasons a credit application was denied.

- In the automotive sector, autonomous vehicles must make real-time decisions to navigate safely. When these decisions can be explained clearly to both passengers and regulators, it bolsters public confidence in the technology.

These examples show that powerful algorithms are not enough: their operations and decisions must also be transparent. Explainable AI bridges the gap between intricate algorithms and human comprehension, fostering trust and accountability. Research suggests that users are more likely to embrace AI when they understand how it reaches its conclusions. Surveys also underline the importance of trust: one from Accenture found that 84% of respondents feel AI must be trustworthy to be effective.

The Role of Data Analysis

Effective data analysis techniques play a pivotal role in evolving AI systems. Key components include:

- Identifying trends within massive datasets for better predictive capabilities, such as spotting consumer buying patterns that can help businesses tailor their marketing strategies.

- Utilizing machine learning algorithms to enhance decision-making processes across sectors—from optimizing supply chain logistics to personalizing customer experiences online.

- Implementing visualization tools that allow stakeholders to interpret complex data outputs easily, transforming statistical information into intuitive formats, like interactive dashboards or infographics.
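Trend identification, the first technique listed above, can be illustrated with a minimal Python sketch. It fits an ordinary least-squares line to a short series of monthly purchase counts. The data and the function name `linear_trend` are hypothetical. A real analysis would typically rely on a library such as NumPy or pandas and would check for seasonality before reading the slope as a trend.

```python
from statistics import mean


def linear_trend(values):
    """Fit y = a + b * x by ordinary least squares, where x = 0, 1, 2, ...

    Returns (intercept, slope); a positive slope indicates an upward trend.
    """
    if len(values) < 2:
        raise ValueError("need at least two observations to fit a trend")
    xs = range(len(values))
    x_bar, y_bar = mean(xs), mean(values)
    # Centered sums of products: slope = S_xy / S_xx.
    s_xy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, values))
    s_xx = sum((x - x_bar) ** 2 for x in xs)
    slope = s_xy / s_xx
    intercept = y_bar - slope * x_bar
    return intercept, slope


# Hypothetical monthly purchase counts for one product category.
monthly_purchases = [120, 132, 128, 145, 150, 162]
intercept, slope = linear_trend(monthly_purchases)
print(f"trend: {slope:+.1f} purchases per month")  # prints "trend: +8.0 purchases per month"
```

A business would read a steady positive slope like this as a growing buying pattern worth targeting. A single straight line cannot capture cyclical or seasonal behavior.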

As we delve deeper into this transformative journey, the synergy between data analysis and Explainable AI promises innovation and ethical advancements in technology. This exploration not only illuminates current practices but also sets the stage for future developments. Major tech companies, including Google and IBM, are investing heavily in research related to XAI, recognizing that to sustain public trust in AI technologies, these systems must be understandable and transparent.

Ultimately, understanding the intersection of data analysis and AI is critical for ensuring these powerful tools serve humanity effectively and ethically. As the landscape continues to evolve, ongoing dialogue and research will be essential to harnessing the full potential of these technological advancements for a better future.

The Critical Contribution of Data Analysis in Enhancing XAI

Data analysis serves as the backbone of Explainable Artificial Intelligence (XAI), providing the foundation upon which transparency and comprehension can thrive. As organizations amass reams of digital information, the skillful application of data analysis techniques transforms this raw data into meaningful insights. This layering of technology not only enhances artificial intelligence systems but also addresses the essential need for clarity in AI operations, fostering greater adoption and trust in these technologies.

The importance of data analysis in the evolution of XAI lies in its capability to uncover invaluable insights and improve user experiences. Here are the critical aspects driving this evolution:

- Data Preprocessing: Before any analysis can begin, data must be cleaned, organized, and processed. This initial step is crucial because it removes errors, duplicates, and inconsistencies from the datasets used to build AI models. It also gives analysts an early chance to detect biases before a model learns them. Faulty data can lead to misleading conclusions and undermine the trustworthiness of AI outputs.

- Feature Selection: Identifying the right features—variables or attributes that are influential in predicting outcomes—is paramount in creating effective AI systems. Through sophisticated analysis, data scientists can pinpoint which features matter most, thereby streamlining the model and enhancing interpretability.

- Model Testing and Validation: Analyzing the performance of various AI models is essential for determining the most effective algorithms for specific tasks. This phase often involves metrics that evaluate model explainability, ensuring that stakeholders can grasp the decision-making processes of the AI systems.

Businesses that emphasize these aspects of data analysis stand to gain significant advantages in ensuring the accountability and reliability of their AI models. For instance, Microsoft and Meta (formerly Facebook) continually refine their algorithms based on data-driven insights to make their AI operations more understandable to users and regulators alike. Both have also released open-source interpretability libraries (InterpretML and Captum, respectively).

The melding of data analysis with AI is not just a trend but a necessity in a data-saturated world. A widely cited World Economic Forum estimate projects that 463 exabytes of data will be created each day by 2025, and AI systems will grow more complex as that volume rises. This growth underscores the urgency of implementing robust data analysis methodologies that prioritize explainability.

Furthermore, regulatory considerations are influencing the trajectory of XAI. For example, the European Union’s General Data Protection Regulation (GDPR) requires that individuals be given "meaningful information about the logic involved" in automated decision-making (Articles 13 to 15). These provisions are often described as a "right to explanation," although the exact scope of that right is still debated. This legal framework amplifies the need for companies to adopt transparent data analysis practices so they can comply and avoid potential legal repercussions.

As we venture deeper into the implications of data analysis on the development of XAI, it becomes clear that the synergy between these two fields is crucial for achieving responsible and ethical AI systems. The fusion of data insights with AI not only enhances operational efficiency but also cultivates a culture of trust between consumers and technology.

| Advantage | Description |
|---|---|
| Enhanced Decision-Making | Data analysis allows organizations to interpret large datasets, resulting in informed decisions. |
| Transparency in AI Models | Explainable Artificial Intelligence enables stakeholders to understand AI decisions, fostering trust. |
| Predictive Analytics | Using historical data to forecast outcomes improves strategic planning. |
| Regulatory Compliance | AI that can explain its decisions aids in meeting legal requirements. |

Data analysis plays a critical role in the evolution of explainable artificial intelligence by offering extensive insights into how these systems function. It helps organizations develop models that not only predict outcomes but also clarify how they reach their decisions. As the demand for accountability in AI grows, so does the importance of making AI systems interpretable. This transparency is key to building public trust and integrating these technologies effectively into society. Emerging data-driven frameworks are proving invaluable in advancing these explainable systems, which points to wide adoption across sectors. As advancements continue to reshape the landscape, organizations should examine the interplay between data analysis and explainable AI closely, because it paves the way for greater operational efficiency and ethical AI deployment.

Bridging the Gap: Ensuring Interpretability in AI through Robust Data Analysis

In this age of rapid technological advancement, the intersection of data analysis and Explainable Artificial Intelligence (XAI) opens up many opportunities for enhancing AI transparency. One critical aspect is model interpretability. As AI models become increasingly sophisticated, decoding their decision-making processes becomes harder. Data analysis plays a pivotal role in making these processes accessible, which smooths the integration of AI systems into everyday applications.

One effective tool in the realm of data analysis is the use of visualization techniques. Visual representations of complex data can significantly aid in elucidating the underlying workings of AI models. Techniques such as heatmaps, decision trees, and SHAP (SHapley Additive exPlanations) values provide stakeholders with intuitive insights, allowing them to grasp how specific variables influence model predictions. For instance, a financial institution leveraging a machine learning model to assess credit risk can visualize feature impacts, thus shedding light on why certain individuals receive approval or rejection.
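SHAP values build on Shapley values from cooperative game theory. For a handful of features, Shapley values can be computed exactly by brute force. The sketch below does this for a tiny, hypothetical credit-scoring model and treats a feature as "absent" by substituting a baseline value. That baseline choice is an assumption; the SHAP library itself uses background datasets and much faster approximations. The function `shapley_values` and the model are illustrative and are not the SHAP library's API.

```python
from itertools import combinations
from math import factorial


def shapley_values(predict, x, baseline):
    """Exact Shapley values for a single prediction.

    A feature "absent" from a coalition is replaced by its baseline value.
    The loop visits every subset of the other features, so the cost grows
    as 2**n; this is only practical for a handful of features.
    """
    n = len(x)
    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            # Shapley weight for a coalition of this size.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                members = set(subset)
                without_i = [x[j] if j in members else baseline[j] for j in range(n)]
                with_i = list(without_i)
                with_i[i] = x[i]  # add feature i to the coalition
                phi[i] += weight * (predict(with_i) - predict(without_i))
    return phi


# Hypothetical credit-scoring model: income and debt in thousands.
def credit_model(features):
    income, debt, years_employed = features
    return 0.5 * income - 0.8 * debt + 0.1 * years_employed


applicant = [60, 20, 5]
average_applicant = [50, 10, 3]
contributions = shapley_values(credit_model, applicant, average_applicant)
for name, value in zip(["income", "debt", "years_employed"], contributions):
    print(f"{name}: {value:+.2f}")
```

For a linear model, each value reduces to the weight times the feature's distance from the baseline (here +5.00, -8.00, and +0.20). The values always sum to the difference between the applicant's score and the baseline score. The institution can therefore state that higher debt, rather than income, pulled this applicant's score down.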

Furthermore, the iterative collaboration between data scientists and domain experts is essential in refining the accuracy and explainability of AI outputs. Cross-disciplinary teams that include not just computer scientists but also industry professionals can harness data analysis in developing contextualized interpretations of AI behavior. For example, healthcare providers using AI to determine patient treatment paths can work closely with data analysts to ensure that the model’s predictions align with clinical guidelines and medical ethics. This partnership not only fosters a more transparent process but ultimately enhances patient care through informed decision-making.

The evolution of XAI is also significantly influenced by the growing field of AI ethics, where issues of accountability and bias have come to the forefront. Through rigorous data analysis, organizations can identify and mitigate biases in their AI models, striving for fairness in their predictions and recommendations. By measuring outcomes across demographic groups and employing fairness-aware algorithms, companies can help ensure that their AI solutions do not perpetuate existing inequities. As a case in point, Google and IBM have developed toolkits, such as Google’s What-If Tool and IBM’s AI Fairness 360, that use data analysis to assess and address bias in AI systems, positioning them as leaders in ethical AI practices.
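Mitigating bias starts with measuring it. The sketch below computes two common group-fairness metrics from a model's approve/deny decisions:

- the demographic parity difference, which is the gap between the highest and lowest group approval rates;
- the disparate impact ratio, which is the lowest rate divided by the highest.

The 0.8 default threshold mirrors the "four-fifths rule" used as a rule of thumb in US employment guidance; it is not a universal legal test. The data and function names are hypothetical. Toolkits such as AI Fairness 360 implement these and many other metrics, and this stand-alone sketch does not reproduce their APIs.

```python
def selection_rates(decisions, groups):
    """Share of positive decisions (1 = approved, 0 = denied) per group."""
    totals, positives = {}, {}
    for decision, group in zip(decisions, groups):
        totals[group] = totals.get(group, 0) + 1
        positives[group] = positives.get(group, 0) + decision
    return {g: positives[g] / totals[g] for g in totals}


def fairness_report(decisions, groups, threshold=0.8):
    """Demographic parity difference and disparate impact ratio."""
    rates = selection_rates(decisions, groups)
    lowest, highest = min(rates.values()), max(rates.values())
    ratio = lowest / highest if highest > 0 else float("nan")
    return {
        "rates": rates,
        "parity_difference": highest - lowest,
        "disparate_impact_ratio": ratio,
        # Flag when the least-favored group's rate falls below
        # `threshold` times the most-favored group's rate.
        "flagged": ratio < threshold,
    }


# Hypothetical approval decisions for two applicant groups.
decisions = [1] * 8 + [0] * 2 + [1] * 5 + [0] * 5
groups = ["A"] * 10 + ["B"] * 10
report = fairness_report(decisions, groups)
print(report)
```

Group B is approved at 50% versus 80% for group A, a ratio of 0.625, so this model would be flagged for closer review. A flag is a prompt for investigation, not proof of unfair treatment.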

Moreover, the advent of automated machine learning (AutoML) tools highlights the relationship between data analysis and AI interpretability. AutoML platforms use data-driven search to automate model selection and tuning, and some also report interpretability outputs, such as feature importances, alongside accuracy. This democratization of AI development enables smaller organizations to harness powerful tools without extensive in-house expertise, broadening access to explainable models across industries.
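One way an automated search could weigh interpretability against accuracy is sketched below. The policy picks the least complex candidate whose validation accuracy is within a tolerance of the best candidate. This policy is a hypothetical illustration and does not describe how any particular AutoML platform works. The candidate names, accuracies, and complexity scores are made up.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    accuracy: float  # validation accuracy, 0 to 1
    complexity: int  # e.g. parameter or leaf count; lower is easier to explain


def select_model(candidates, tolerance=0.01):
    """Return the simplest candidate within `tolerance` of the best accuracy."""
    best = max(c.accuracy for c in candidates)
    eligible = [c for c in candidates if c.accuracy >= best - tolerance]
    return min(eligible, key=lambda c: c.complexity)


# Hypothetical results from an automated model search.
candidates = [
    Candidate("gradient_boosting", 0.912, 5000),
    Candidate("shallow_tree", 0.885, 15),
    Candidate("logistic_regression", 0.905, 12),
]
chosen = select_model(candidates, tolerance=0.01)
print(chosen.name)  # prints "logistic_regression"
```

Raising `tolerance` trades a little accuracy for a simpler, more explainable model. Setting it to zero always returns the most accurate candidate.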

The accelerating pace of innovation within data analysis, and its implications for XAI, suggests that we are at a crucial juncture. As AI systems evolve to handle increasingly complex tasks, the role of data analysis in keeping these advancements transparent and comprehensible cannot be overstated. Organizations that prioritize explainability in their AI development will improve user trust and satisfaction and ease regulatory compliance. In doing so, they help ensure that AI’s benefits are both effective and equitable.

Conclusion: The Future of Data Analysis and Explainable AI

As we look to the future, the synergy between data analysis and Explainable Artificial Intelligence (XAI) promises to reshape how organizations harness the power of AI. The growing emphasis on model interpretability is not just a trend; it is a pivotal necessity for fostering trust and accountability in AI systems. With advancements in visualization techniques and collaborative strategies, stakeholders can now navigate the complexities of AI decision-making with greater clarity.

Furthermore, the pressing need for ethical AI development has elevated the role of data analysis in identifying and mitigating biases. Organizations are beginning to realize that the pursuit of fairness is not merely a compliance requirement but a moral imperative that enhances brand credibility and public trust. By adopting fairness-aware algorithms, companies can help ensure that their AI models reflect an equitable approach, addressing societal disparities rather than perpetuating them.

The advent of automated machine learning (AutoML) is democratizing access to powerful AI tools, allowing even smaller entities to engage with explainable models. This democratization opens the door for innovative applications across diverse sectors, from healthcare to finance, leading to smarter, data-driven decisions that benefit society as a whole.

In conclusion, the trajectory of data analysis intertwined with the evolution of XAI sets the stage for a new era in AI development. By prioritizing transparency, ethics, and collaboration, organizations can ensure that their AI initiatives are not only advanced but also just and accountable. As we move forward, embracing these principles will be key to unlocking the full potential of AI technology while maintaining societal trust and integrity.